Evolving technologies, including the smart grid, can provide electric power utilities with unprecedented capabilities for forecasting demand, shaping customer usage patterns, preventing outages, optimizing unit commitment and more. At the same time, these advances also generate unprecedented data volume, speed and complexity. One aspect of the smart grid evolution is the omnipresence of communications and information technologies (IT) to have better knowledge of the state of the grid and to make more efficient decisions.

To turn this information into actionable insight, utilities such as Hydro-Québec must be capable of high-volume data management and advanced analytics.

When thinking about the smart grid, it is far from obvious that the electric utility industry has all the answers on what IT architecture will support it. Even before the smart grid, utilities were struggling with IT challenges. The smart grid adds the big-data dimension, which can make things even more challenging.

More and More Data

Big data is commonly described by four V’s: volume, velocity, variety and veracity. Volume is the massive amount of data, but it is only one dimension. Velocity is the speed at which utilities receive the data; a phasor measurement unit, which streams time-stamped measurements many times per second, is a good example. Variety is the heterogeneity of the different sources of data. The last dimension of big data, but not the least, is veracity: the accuracy and truthfulness of the data. Improving veracity requires minimizing the sources of error, namely inconsistencies, duplication and missing data.
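
The three error sources named above can be counted with very little code. The following is a minimal sketch in Python, not a description of any utility's actual tooling; the sample records, the field names (`id`, `rated_kva`) and the `veracity_report` helper are hypothetical and exist only for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical asset records; identifiers and values are invented.
records = [
    {"id": "T-001", "type": "transformer", "rated_kva": 500},
    {"id": "T-002", "type": "transformer", "rated_kva": None},  # missing value
    {"id": "T-001", "type": "transformer", "rated_kva": 500},   # exact duplicate
    {"id": "T-003", "type": "transformer", "rated_kva": 750},
    {"id": "T-003", "type": "transformer", "rated_kva": 1000},  # conflicting value
]

def veracity_report(rows, key="id"):
    """Count missing data, duplication and inconsistencies in a list of records."""
    # Missing data: records with at least one empty field.
    missing = sum(1 for r in rows if any(v is None for v in r.values()))

    # Duplication: identical records that appear more than once.
    counts = Counter(tuple(sorted(r.items())) for r in rows)
    duplicates = sum(c - 1 for c in counts.values())

    # Inconsistency: the same key described by different records.
    variants = defaultdict(set)
    for r in rows:
        variants[r[key]].add(tuple(sorted(r.items())))
    inconsistent = sorted(k for k, v in variants.items() if len(v) > 1)

    return {
        "missing": missing,
        "duplicates": duplicates,
        "inconsistent_ids": inconsistent,
    }

report = veracity_report(records)
print(report)
```

A report of this kind only measures the problem. Deciding which of two conflicting values is correct still requires business knowledge.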

In a recent survey, IBM found that one in three business leaders do not trust the information they use to make decisions. Gartner research cites poor data quality as the No. 1 reason for project cost overruns. According to The Data Warehousing Institute, the cost of bad, or dirty, data exceeds US$600 billion for U.S. businesses annually. In an infographic, InsightSquared stated the following:

- Data quality best practices can boost revenue by 66%.

- Poor data quality across business and government costs the U.S. economy $3.1 trillion a year (insidearm.com).

- Data quality is a barrier for adopting business intelligence/analytics products for 46% of survey respondents.

Electric power utilities need accurate data and cross-sectional information to make valuable business decisions. Building an enterprisewide unified information view is a complex task because of the heterogeneity and lack of consistency in the different sources. Still, decision makers need only one version of the truth. How can common ground be reached?

Integrating All Forms of Information

Exchanging information between systems is a lot like communication between two individuals using different phones. Several layers of interoperability are involved. The first is technical interoperability, which moves the information from one system to another. Many IT solutions cover this area; an enterprise service bus (ESB) is one of them. For many utilities, reaching technical interoperability is not a major issue. By comparison, the telephone has near-perfect technical interoperability: one can call anybody, anywhere, using any kind of device, and it will work.

But is that sufficient? If the two people do not speak the same language, there will be no information exchange. The same concept applies to systems. To exchange information between applications, technical interoperability is not enough; semantic interoperability is also needed. Semantic interoperability rests on two things: a common language, called an ontology, and semantic technologies.
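
The language analogy can be made concrete. In the sketch below, two systems describe the same transformer with different field names, and the records only become comparable once each is translated into a shared vocabulary. This is a plain dictionary mapping written in Python, far simpler than a real ontology; the system names, local field names and shared terms are all hypothetical.

```python
# Hypothetical mappings from each system's local field names to shared terms.
GIS_TO_COMMON = {"xfmr_id": "id", "label": "name", "kva": "rated_kva"}
ERP_TO_COMMON = {"asset_no": "id", "description": "name", "capacity_kva": "rated_kva"}

def to_common(record, mapping):
    """Translate a local record into the shared vocabulary.

    Fields with no entry in the mapping are dropped.
    """
    return {mapping[k]: v for k, v in record.items() if k in mapping}

# The same physical asset, as two systems might describe it.
gis_record = {"xfmr_id": "T-001", "label": "Transformer 1", "kva": 500, "layer": 12}
erp_record = {"asset_no": "T-001", "description": "Transformer 1", "capacity_kva": 500}

# In their local form the records share no field names at all.
shared_fields = set(gis_record) & set(erp_record)

# Once translated, they can be compared directly.
gis_common = to_common(gis_record, GIS_TO_COMMON)
erp_common = to_common(erp_record, ERP_TO_COMMON)
print(shared_fields, gis_common == erp_common)
```

Neither system has to change its own field names. Each only needs a mapping to the common terms, so adding a third system means writing one more mapping rather than one per existing system.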

Electric power utilities have one of the most complete ontologies: the International Electrotechnical Commission (IEC) common information model (CIM). The IEC CIM is defined through a set of IEC international standards, mainly IEC 61970-301 and IEC 61968-11. The first version was standardized in 2003, and the model now contains more than a thousand concepts covering generation, transmission and distribution.

Beyond the ontology itself, the semantic interoperability layer needs technologies suited to it. These are the semantic technologies, a complete set of World Wide Web Consortium (W3C) standards, such as RDF, OWL and SPARQL, that are well supported by software vendors (for example, Oracle Spatial and Graph or IBM DB2).

CIM, the Obvious Choice

Like any other ontology, the CIM ontology is not perfect. In fact, ontologies, just like human languages, are living artifacts subject to change. The CIM is not an exception. Human communities are bounded by what they can talk about and how they can talk about it. When new experiences exceed current vocabularies, languages solve the problem by developing new words and grammar. It is exactly the same process with ontologies like the CIM, which give the definition of the basic concepts in the electric domain and the relations among them. They have to evolve iteratively to meet the evolution of the domain.

Although a common metadata layer like the CIM presents a significant opportunity to overcome the semantic barriers between existing information silos, it is not without its own set of challenges. Some aspects of conventional technologies like relational databases may be insufficient to meet the emerging challenges implied by the use and evolution of the CIM. Semantic technologies, with their graph structures and their ability to capture semantic differences and meanings, are well suited for the CIM. To take full advantage of the CIM, it must be used in combination with the right tool. Otherwise, it is like trying to use a hammer when, really, a saw or screwdriver is needed.
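
A small sketch shows why a graph structure copes well with an evolving model. It stores facts as (subject, predicate, object) triples in a plain Python set, an assumption made to keep the example self-contained; real semantic technologies use RDF stores and SPARQL queries. The identifiers and predicate names are hypothetical, and the `match` helper is not part of any library.

```python
# Each fact is a (subject, predicate, object) triple.
triples = {
    ("T-001", "type", "PowerTransformer"),
    ("T-001", "locatedIn", "SUB-A"),
    ("SUB-A", "type", "Substation"),
}

def match(graph, s=None, p=None, o=None):
    """Return the triples matching a pattern; None acts as a wildcard."""
    return sorted(
        t for t in graph
        if (s is None or t[0] == s)
        and (p is None or t[1] == p)
        and (o is None or t[2] == o)
    )

# The vocabulary evolves: a new kind of relation is simply one more triple.
# No existing triple is rewritten and no table definition has to change.
triples.add(("T-001", "feeds", "LOAD-7"))

about_t001 = match(triples, s="T-001")
substations = match(triples, p="type", o="Substation")
print(about_t001)
print(substations)
```

In a relational design, the new `feeds` relation would typically mean a new column or table and a schema migration. In the triple form, old queries keep working and new ones can use the added relation immediately.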

Leveraging, Not Replacing, Assets

As more smart grid technologies are deployed, the grid will require more and better data to reach its full potential. The Electric Power Research Institute (EPRI) contends the intelligence of the smart grid relies critically on the quality of the data. In fact, whether the data is big or small, static or moving, structured or unstructured, data quality is always a critical dimension to consider. Ways must be found to improve and maintain the level of data quality the smart grid requires.

At the same time, existing utility systems represent massive sunk costs, legacy knowledge and expertise. There is a need to preserve prior investments in knowledge, information and IT assets while improving data quality.

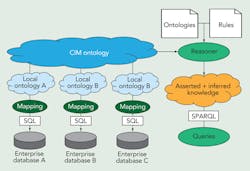

To leverage the value already embodied in existing systems and improve the veracity of the data, Institut de recherche d’Hydro-Québec (IREQ) researchers use semantic technologies and the CIM as a thin layer placed over existing enterprise systems. The benefits of such an architecture include enabling reasoning over multiple enterprise systems and breaking legacy silos by describing the semantic relationships between enterprise data sources. Gartner has documented how technology islands and silos can be a potential source of inconsistencies and conflicting information for utilities.

Another benefit of this approach is that existing systems can continue to provide the functionality for which they were originally designed and deployed. At the same time, the semantic layer can quickly highlight inconsistencies across multiple enterprise data sources.
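
The idea of a thin layer that reads from existing systems without modifying them can be sketched as follows. This Python example assumes both sources have already been expressed in a common vocabulary and keyed by a shared asset identifier; the source contents, attribute names and the `find_conflicts` helper are hypothetical and are not IREQ's implementation.

```python
# Hypothetical read-only views of two enterprise sources, already in shared terms.
source_a = {
    "MH-1": {"name": "Manhole 1", "depth_m": 2.5},
    "MH-2": {"name": "Manhole 2", "depth_m": 3.0},
}
source_b = {
    "MH-1": {"name": "Manhole 1", "depth_m": 2.5},
    "MH-2": {"name": "Manhole 2", "depth_m": 3.5},
    "MH-3": {"name": "Manhole 3", "depth_m": 2.0},
}

def find_conflicts(a, b):
    """List (asset, attribute, value_in_a, value_in_b) where the sources disagree.

    Only assets and attributes present in both sources are compared.
    Neither input is modified.
    """
    conflicts = []
    for asset_id in sorted(a.keys() & b.keys()):
        rec_a, rec_b = a[asset_id], b[asset_id]
        for attr in sorted(rec_a.keys() & rec_b.keys()):
            if rec_a[attr] != rec_b[attr]:
                conflicts.append((asset_id, attr, rec_a[attr], rec_b[attr]))
    return conflicts

conflicts = find_conflicts(source_a, source_b)
only_in_b = sorted(source_b.keys() - source_a.keys())
print(conflicts)
print(only_in_b)
```

Because the comparison runs on views of the data, the source systems keep operating exactly as before. The layer only reports where they disagree or where one source knows about an asset the other does not.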

Getting the Most Out of Big Data

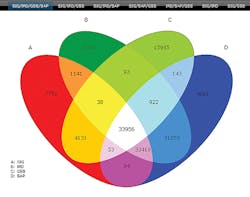

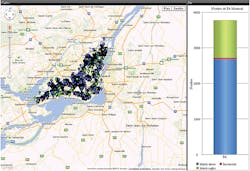

The semantic approach proposed by IREQ goes well beyond giving an overview of inconsistencies across multiple enterprise systems. It can use rules to capture business logic, enrich data with new knowledge or improve data quality. For example, considering only the underground manhole structures on the Island of Montreal in two enterprise data sources, the system can automatically raise the consistency of the data from 70% to 95%. This is done just by adding a few logic rules, without any modification to the source code of the underlying enterprise systems.
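
How a few rules can raise a consistency score may be easier to see with a toy calculation. The Python sketch below defines consistency as the share of common assets whose values agree, then applies two normalization rules to copies of the data. The definition, the rules and the sample values are assumptions chosen for illustration; the resulting figures are unrelated to the 70% and 95% reported above.

```python
# Hypothetical cover-type attribute for the same four manholes in two sources.
source_a = {"MH-1": "ROUND", "MH-2": "round", "MH-3": " Square", "MH-4": "round"}
source_b = {"MH-1": "round", "MH-2": "round", "MH-3": "square", "MH-4": "square"}

def consistency(a, b):
    """Fraction of assets present in both sources whose values are identical."""
    shared = a.keys() & b.keys()
    agree = sum(1 for k in shared if a[k] == b[k])
    return agree / len(shared)

def apply_rules(data, rules):
    """Return a new mapping with every rule applied in order to each value.

    The input mapping is left untouched, mirroring the idea that the
    source systems themselves are not changed.
    """
    result = {}
    for key, value in data.items():
        for rule in rules:
            value = rule(value)
        result[key] = value
    return result

# Two simple rules: ignore surrounding whitespace, ignore letter case.
rules = [str.strip, str.lower]

before = consistency(source_a, source_b)
after = consistency(apply_rules(source_a, rules), apply_rules(source_b, rules))
print(before, after)
```

In this toy data the rules remove differences that are purely a matter of notation. The one remaining disagreement, on `MH-4`, is a real conflict that no formatting rule can resolve.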

Data mining is the process of analyzing and turning large collections of data into useful knowledge. It can be seen as a natural evolution of information technology, where huge volumes of data accumulated through databases are analyzed, classified and characterized over time. To improve the efficiency of mining results, raw data has to be preprocessed by aggregating data, removing contradictions and enhancing information.

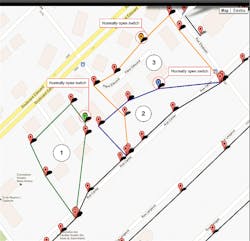

The use of semantic technologies and CIM modeling can reduce the preprocessing effort significantly. In fact, when heterogeneous data sources use a commonly shared ontology, it becomes possible to align and merge data automatically. In addition, common semantics enable additional valuable information and knowledge to be inferred and extracted. In IREQ’s case, the utility has been able to extract complex patterns such as padmounted power transformer rings. The utility’s experience has shown that the combination of ontologies and semantic technologies brings an advantage in preprocessing and enhancing data.
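
Once connectivity is expressed as a graph, structural patterns can be searched for directly. The article does not describe how IREQ detects transformer rings, so the following Python sketch rests on a stated assumption: a ring is a group of at least three transformers in which each one is connected to exactly two others, forming a closed loop. The connection data and the `find_rings` helper are hypothetical.

```python
from collections import defaultdict

def find_rings(edges):
    """Return connected groups that form a simple closed loop.

    A group qualifies when it has at least three members and every
    member has exactly two neighbours.
    """
    neighbours = defaultdict(set)
    for u, v in edges:
        neighbours[u].add(v)
        neighbours[v].add(u)

    visited, rings = set(), []
    for start in sorted(neighbours):
        if start in visited:
            continue
        # Collect everything reachable from this starting point.
        component, stack = set(), [start]
        while stack:
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(neighbours[node] - component)
        visited |= component
        if len(component) >= 3 and all(len(neighbours[n]) == 2 for n in component):
            rings.append(sorted(component))
    return rings

# Hypothetical connections between transformers.
connections = [
    ("T1", "T2"), ("T2", "T3"), ("T3", "T1"),   # closed loop
    ("T4", "T5"),                               # simple pair, not a ring
    ("T6", "T7"), ("T7", "T8"),                 # open chain, not a ring
]

rings = find_rings(connections)
print(rings)
```

The point of the sketch is that the pattern is found from relationships alone. With aligned data from several sources, the same kind of query can draw on connections that no single system records in full.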

Semantic interoperability ensures that the information exchanged between systems is properly understood and interpreted. The number of databases and information systems in use by utilities shows the importance of having a common language and common semantics. This is particularly true for electric power utilities, where the volume of information is growing fast and will continue to increase with the introduction of smart technologies. Nowadays, everybody is trying to achieve the smart grid. How smart can it be with bad data?

Acknowledgement

The work described in this article is the result of a team effort, and many people at IREQ played a major part in this accomplishment. Not every contributor can be named here; however, the efforts of Arnaud Zinflou, Mohamed Gaha and Alexandre Bouffard were exemplary.

Mathieu Viau is a computer engineer who graduated in 2002. He worked at Hydro-Québec’s transmission control center for five years, where he was responsible for IT integration and architecture. Since 2008, he has been working at the Hydro-Québec R&D division, where he is leading the semantic interoperability work.

Companies mentioned:

Data Warehousing Institute | http://tdwi.org/Home.aspx

EPRI | www.epri.com

Gartner | www.gartner.com

GridWise Architecture Council | www.gridwiseac.org

InsightSquared | www-new.insightsquared.com

IREQ | www.hydroquebec.com/innovation/fr/index.html

W3C | www.w3.org